with AMATEUR BOTANIST

a video by KOTRYNA ULA KILIULYTE

Oh, there ain’t nobody here but us chickens

There ain’t nobody here at all

So quiet yourself and stop that fuss

There ain’t nobody here but us

Kindly point the gun the other way

And hobble, hobble, hobble, hobble, off and hit the hay

Hey, hey bossman, what do you say?

It’s easy pickins, there ain’t nobody here but us chickens.

~ From “Ain’t Nobody Here but Us Chickens,” by Alex Kramer and Joan Whitney, recorded by Louis Jordan and his Tympany Five, 1946. Based on a popular joke about a Black man in a hen house, as seen in Everybody’s Magazine, 1908

Every story I’m about to tell you, I bring up too often. It’s like a graveyard of horses that I’ve finally stopped beating and laid to rest, only to watch them come back to life every spring. They sprout wildflowers from their coats and clomp the earth and pull up grass with their lips like they’d never stopped.

The first scene occurred when I was in college—white, twenty, female, there on scholarship. I mention the scholarship because when you’re on scholarship at an elite school, it’s something you think about every day, every time you walk into a classroom: you wonder if everyone knows, if there’s something about you that doesn’t belong. I mention the whiteness because it helped me pass.

I had chosen to study English and biology, and it quickly became clear that such crossbreeding mischief was frowned upon. My English professors blinked at me when I would bring up the sciences, while my biology professors waved away my artsy, off-topic remarks like mosquitoes. When I later learned the term ‘imposter syndrome’, these were the earliest memories that sprang to mind. One day towards the end of a semester, my ecology professor marked me down half a grade for a research presentation. A solitary note on his grading form read, “Joke.”

When I asked what he meant, he cited a crack I’d made mid-presentation (a fairly base joke, a pun—I’m not proud and I won’t repeat it). “Oh,” I said. “Sorry. I was just trying to, you know, keep everyone awake.” Lighten the mood in an otherwise dreary week of student lectures. That’s when he uttered a phrase that has remained etched on my psyche: “There is no place for humor in a scientific presentation.”

Over a decade later, as I make my living as a humorist and science writer, I replay that encounter every day. Namely, I thank the man who threw down the gauntlet that slap-started my career trajectory. His words lit in me a rebellious incredulity: I was going to prove him wrong. But I also felt conflicted. My professor, a man of science, had given me an absolute. Another word for an absolute is a “fact.” Who was I to question him?

At the same time… Isn’t “incredulous” just another word for “skeptical”? And isn’t skepticism the very basis for science?

Science is both a method for arriving at understanding and the aggregate body of knowledge achieved through use of that method. If skepticism is one aspect of that tool, it stands to reason we’re allowed to be skeptical of one of its key products, scientific fact. But while facts and answers are the business of science, absolutes and the opposition to questions feel more like functions of power than of learning. Research questions, yes; questioning authority, no?

As I struck out on my own, I found myself seeking out scientists who seemed to embrace questions and spurn absolutes. Some of the greatest scientists of our time seemed to fit this bill. At the risk of sounding like an inspirational college dorm poster, I’ll brazenly quote Albert Einstein, who said: “The important thing is not to stop questioning.” And then there’s this televised speech by Richard Feynman, theoretical physicist and science communicator, beloved among scientists for his playful intolerance of unscientific questions:

You see, one thing is, I can live with doubt, and uncertainty, and not knowing. I think it’s much more interesting to live not knowing than to have answers which might be wrong. I have approximate answers and possible beliefs and different degrees of certainty about different things. But I’m not absolutely sure of anything, and there are many things I don’t know anything about. . . . But I don’t have to know an answer. I don’t feel frightened by not knowing things, by being lost in the mysterious universe without having any purpose, which is the way it really is, as far as I can tell—possibly. It doesn’t frighten me.

Doubt, uncertainty, and not knowing don’t frighten Richard Feynman. Is that because he’s at the top of his field? Or is that how he got to be at the top of his field? I imagined myself on television with undergraduate science credentials, bravely, if falsely, proclaiming to the BBC cameras that I, too, was unafraid of not knowing things.

Something wasn’t adding up. If science claims ownership of the ultimate pursuit of knowledge, what harm does it do to bring up physics in a biology course? Or literature? Or a joke now and again? Someone, somewhere in science was afraid of something. What do scientists—what does science as a whole—have to be afraid of?

WHO’S ON FIRST

There’s a trope among comedians that you should be able to joke about anything. Or rather, there shouldn’t be anything you’re not able to joke about. The conclusion I prefer is that you can joke about anything, but it has to be funny. Painful issues like oppression, illness, violence—they shouldn’t be off limits as a rule, but if you’re gonna go there, you better be funny. Hell, comedy is supposed to be how we deal with pain: “Tragedy plus time is comedy” goes the saying, semi-attributed to famous white comedy guy Steve Allen, but it’s been popular in comedy circles so long. Who knows who said it first? It keeps getting said because it’s true. The horse keeps coming back to life because it’s funny because it’s true. It’s fertile ground. Rich for tilling. Maybe it’s rich because of all the dead horses but—I kid, I kid.

The scientific equivalent of the choice to not make a joke is for a scientist to choose not to keep prodding. To be satisfied with an outcome or a finding, full stop. But this is why scientific papers have sections of Recommendations for Further Research. And, for that matter, Limitations—that is, what limitations did the study face that might have affected the resultant findings. To openly share these limitations, that’s good science. To be comfortable in the not knowing—even excited by it, that’s good science. Perhaps it’s most useful to remind ourselves there is good science and bad science, just like there is comedy that’s funny and comedy that’s not.

What offends me much more than comedy about serious subjects are serious subjects where comedy is not allowed. This is usually a situation in which someone elbows me and hisses, “That’s not funny!” Church, maybe. A funeral. TSA security checkpoints. And science, apparently. Conclusion? The folks who most often decry comedy are the folks who have the most to lose. Sometimes, this is fair: my mother doesn’t like me to joke about death because she doesn’t want me to die. But sometimes the people who have the most to lose are the people who have the most, period. More directly: arbiters of what is funny and what isn’t are often people with the most privilege. Because comedy is often a weapon with which the disenfranchised make their voices heard. There’s another phrase in the comedy world, among comedians who also care about their fellow humans: “Punch up.” It refers to the fact that if comedians must make a punchline of someone, they should make a punchline of the person with the highest status, or higher status, at least, than themselves. Comedy that punches down only stands to kick the members of society who are already down. Comedy that punches up stands a chance to shake things up in the higher strata of society. Here again, the solution isn’t to avoid serious subjects, but to remember that the funniest subject isn’t the subject but the king.

So perhaps it’s no surprise that comedy got no love among the architects of Western civilization. Tragedies were apparently much more fashionable than comedies in Greece and attracted the largest audiences and best actors. Aristotle once said that comedic theatre was “not an object of attention.” Aristotle was also, however, employed by the emperor. If satirists of ancient Greece were doing their job, they were definitely taking shots at Aristotle. He worked at the behest of the King of Macedonia, after all. He literally tutored Alexander. The ‘Great’ one.

Aristotle the Humorless is well-known as a philosopher whose ideas helped lay the groundwork for modern democracy. But he is also revered as one of the earliest figures in Western science. It wasn’t called science then—not until the 1800s. In his time, such work was called “natural philosophy.” Literally: Aristotle was a philosopher, so when he went outside and looked at nature, he applied his personal philosophies concerning logic to the process of observing nature, and so the groundwork for modern science began to fall into place. Over time, other Western white guys like Plato, Francis Bacon, and William Whewell built upon Aristotle’s work, and from their heads burst science, fully formed. I kid, but the basic tenets of their work have remained intact ever since. This is the Scientific Method. The fruit of the labors of these Great white minds is, according to the accepted definition, Science.

If you’re waiting for the punchline here and trying to figure out if it’s coming from above or below: there isn’t one. It’s just a fact. Science is the work of humans. Specifically, science is the work of white, Western, mostly wealthy humans. Western science is science. But if something about that feels funny, it should.

Take for instance Christopher Columbus (speaking of dead horses). He should be a joke, by now. It’s laughable: the idea that Christopher Columbus ‘discovered’ America, when there were already people living here. This scene sounds like the set up for an old vaudeville joke: some guy proudly walks into a room full of people and calls over his shoulder: “There’s nobody home!” while the people whose home he’s just busted into stand around looking at one another, like: “Who is this joker—what am I, chopped liver?”

I don’t say this to make light of a situation that ultimately led to genocide. I won’t insult us all by dropping that “I laugh because I must not cry” quote by Abraham Lincoln, aka the Great Emancipator, aka the US President responsible for the largest mass execution of indigenous Americans (which was his job, but even so). To me, that should be obvious: “There’s nobody home,” when there clearly is. That’s laughable. Sinister. And therefore, by necessity, laughable.

The notion that SCIENCE is in fact Western science isn’t “Funny Ha Ha,” but it should be a little unsettling. The scenario calls to mind the film trope where police investigators, in hot pursuit of a fugitive (our hero), run their utility lights around a seemingly empty room and exeunt, calling to offscreen colleagues, “There’s nothing here, boss,” before the camera tilts to the ceiling, where our hero sweats, chimney-sweep braced against the corners of the room and mutters, “They never look up.”

WAYS OF NO-ING

I have, until this point, diligently avoided making the pun about a fallow field. Like. You know. The field of science.

But this is precisely the subject of the work of Robin Wall Kimmerer, a member of the Citizen Potawatomi Nation and plant ecologist in the Western tradition. The assumption underlying the concept of ‘fallow’ is that fallowness is inevitable. That the only method by which to farm a plot of land is the method that will eventually leave it void of nutrients and in need of rest. Kids of the twenty-first century might call this designed obsolescence. But it’s all in your technique.

In her now-famous book, Braiding Sweetgrass: Indigenous Wisdom, Scientific Knowledge, and the Teaching of Plants, Kimmerer recounts an experiment she conducted related to the indigenous practices of harvesting just part of the sweetgrass yield, which, she proves, makes for better yields the following year. But this wasn’t her experiment. The outcome of better yields had been proven year in, year out, by her community for thousands of years. The subject of her experiment was in fact her colleagues in Western science. She wanted to see what it would take to convince them of the scientific truths embedded in traditional wisdom, and she hypothesized that they would only be able to recognize her findings if she translated traditional wisdom into jargon. (She even lays out this chapter in the form of a scientific paper.)

Her hypothesis held up. One colleague even “retracted his initial criticism that this research would ‘add nothing new to science.’” Kimmerer won them over with what she calls the “language of mechanism and objectification”—talking about removing 50% of biomass and population density. Even words like “vigor” and “mortality” worked in her favor, which should feel a little funny. These are words you might find in an English sonnet. But also they have established scientific meanings. That’s Western science for you.

Luckily for humans, the past millennium has given rise to a few new theoretical frameworks. There’s a phrase, Ways of Knowing, that’s come into common usage in a variety of fields that deal in the intersection of science and culture, including medicine, mathematics, anthropology, museum studies, and even science education. Ways of Knowing refers to the tools by which humans come to understand the natural world, and it includes within it: science. Just like ‘science,’ here is a term that defines both the tool (science; ways) and the resultant body of knowledge (science; knowing). Unlike science, this framework allows for vehicles for understanding that have origins beyond the realm of Western/white/colonialist history. Science is a way of knowing. Not all ways of knowing are science. Math, for instance, is another way of knowing. So is an experience one has, something they see with their own eyes. So is a story passed down through a hundred generations.

In Kimmerer’s scenario, the indigenous way of knowing is the hero, chimney sweeping on the ceiling. Western science is the police officers who didn’t think to ‘look up’ and concluded there was nothing there to find. The false assumption here is that the entire universe is only where the flashlights fall. They didn’t think to look up—therefore, nothing up exists. It’s laughable. It’s concerning.

There’s a 1995 film called Dead Man, starring Johnny Depp, a white man, and Gary Farmer, a member of the Cayuga tribe. Depp plays a white man who coincidentally shares a name with the poet and artist William Blake, and Farmer plays an indigenous man nicknamed Nobody. Farmer inspired the character and worked with writer and director Jim Jarmusch, a white man, to develop it in a way that Farmer saw as consistent with the culture of his people. The characters’ names work well as a vaudeville-style comic device: white guy asks William Blake who he’s traveling with, William Blake replies, “Nobody.” Or consider this scene (which contains spoilers):

BIG GEORGE. [Aiming his gun at Blake] Well goddamn it, I guess nobody gets you.

[Nobody emerges from woods and slits Big George’s throat]

WILLIAM BLAKE. Nobody!

Beyond being a good bit, it’s also a useful device for reflection on the notion of ‘wisdom,’ and who gets to have it. Throughout the film, Nobody recites the actual poetry of actual William Blake, and he is the only person in the film, including William Blake, who has heard of William Blake. Farmer said in a 1996 interview, “Like Nobody, it took me a long time to get past all the things society laid on me and reconnect with who I really am.” Once you start looking up, digging down, and knocking on the walls of dominant, Western/white/colonized institutions, you find that whitewash isn’t just superficial. It hides serious infrastructure problems that should really be addressed.

I saw a meme recently (and do let me know if you find out who made it, it’s now lost to the internet, like the sands of time): “Just because white people couldn’t do it doesn’t mean it was aliens.” Western science is around 2500 years old. The Mayans, on the other hand, were studying astronomy for four thousand years before Spanish colonialists even showed up. Most Westerners need to be reminded that the basis for modern math came from the Middle East, but even fewer of us have heard of a Muslim physician named Abu Qasim Khalaf Ibn Abbas Al Zahrawi (or Albucasis or Zahravius, as he was known in the West), who was the first to invent a trove of modern surgical practices, including the scalpel, surgical and obstetric forceps, and treatments for skull fractures that are still used today. Millennia-old traditional Chinese medicine still earns criticism from Western scientists because of its use of the rivers of China as an illustrative tool for the movement of energy through the body—but in 2015, practitioner Tu Youyou earned a Nobel Prize in medicine for her contribution to an effective malaria treatment. Before working with a team of Western-tradition scientists, traditional Chinese medicine was Tu’s way of knowing. Then, the two disciplines converged their ways, and we as humanity are one step closer to curing malaria, worldwide.

These are just a fraction of the cautionary tales that lie at the heart of Eurocentric scientific bias. At worst, Western science paints non-Western knowledge as dangerous, mysterious, and other—literally alien. At best, non-Western knowledge is the equivalent of “There’s nothing/nobody here.” But which is it? Is Western science the guy on the ceiling or the guy getting eaten by the guy on the ceiling? Either way, it’s high time that we make the ceiling part of the purview. Or, in the more acceptable words of Albert Einstein: “It is important for the common good to foster individuality: for only the individual can produce the new ideas which the community needs for its continuous improvement and requirements—indeed, to avoid sterility and petrification.”

There are plenty of moments in scientific history when the ‘accepted’ wisdom was wrong. Every time an animal was labeled as being ‘worthless’ to an ecosystem, for instance—this happened with mosquitos at one point, jellyfish another. This is as laughable as Columbus because the thing about ecosystems is that they’re systems: the system uses all of the parts because the system is all of the parts. Then there’s the mystery strings of DNA once called ‘junk’ DNA. At first, when scientists couldn’t match certain base pairs to any known genetic function, they labeled them as ‘junk’: extraneous, vestigial. But as it turns out, the DNA in those ‘junk’ piles is what provides DNA the flexibility to evolve.

Take for instance something as seemingly basic as the definition of life. The subject is notorious among scientists for being hard to pin down. As Steven A. Benner, an expert in the study of “origin of life,” stated in a 2010 article: “The question is hardly new, nor is the recognition of its difficulty. Also not new is a certain imprecision in the language used to address this question and therefore an imprecision in the consequent ideas.”

Daniel Koshland recently provided an anecdote that illustrates this imprecision. As president of the American Association for the Advancement of Science (which publishes the prestigious journal Science), Koshland recounted his own experience with a committee that was charged to generate a definition of life:

What is the definition of life? I remember a conference of the scientific elite that sought to answer that question. Is an enzyme alive? Is a virus alive? Is a cell alive? After many hours of launching promising balloons that defined life in a sentence, followed by equally conclusive punctures of these balloons, a solution seemed at hand: “The ability to reproduce—that is the essential characteristic of life” said one statesman of science. Everyone nodded in agreement that the essentials of a life was the ability to reproduce, until one small voice was heard. “Then one rabbit is dead. Two rabbits—a male and female—are alive but either one alone is dead.” At that point, we all became convinced that although everyone knows what life is, there is no simple definition of life.

The imprecise use of language is manifest. The ‘elite’ have confused the concept of ‘being alive’ with the concept of ‘life.’ This is not simply the mistaking an adjective for a noun. Rather, it represents the conflation of a part of a system with its whole. Parts of a living system might themselves be alive (a cell in our finger may be ‘alive,’ as might a fertilized ovum in utero). But those living parts need not be coextensive with a living system and need not represent life. Using language precisely, one rabbit may be alive even though he or she is not life.

Besides the intrigue and complexity of defining life, the encouraging thing here is that there is evidence that science is examining itself. According to Benner’s article, it became necessary for science to look at science. And at scientists themselves.

I’ll never have to tell the “no place for humor” story again, because a new scene has since occurred that has irreversibly re-colored the memory. About a month ago, I was invited back to my undergraduate alma mater’s biology department. The same department in which I felt like an embarrassment, an attitudinal pariah for cracking wise and asking ‘off topic,’ hand-wavy questions about cross-disciplinarity among fields.

The invitation made me feel like a prodigal son, but still, I was nervous. Until I walked into the lecture hall and realized that the faces that greeted me weren’t just professors. There were students there. Young people. And they wanted to hear from me. The things that made me an oddball major in my day made me approachable to them. There were other oddballs on the panel too, and we were the ones who got the most questions from students, themselves feeling like oddballs, wondering what they didn’t yet know they didn’t know about their futures. The oddball answers and questions also got the most laughs.

I learned something from the Q&A too: five years after I graduated, the biology department head called an all-major, all-professor meeting. When everyone was gathered, the department head outstretched both hands like a preacher and looked out at the hushed crowd with an earnest but solemn expression (so goes the story as woven by a current professor and one of my fellow panel oddballs).

“It’s official,” proclaimed the department head, “It’s all connected. It’s all. Connected.” He meant the disciplines: physics connects to chemistry connects to ecology connects to anatomy and physiology. This was the same professor who told me my jokes had no place in his classroom. These were the words that, five years prior, had set me apart as an oddball. Apparently the department also requires all its graduates to present their findings in two formats: one for scientific audiences and one for lay audiences. And humor is encouraged.

© Copyright for all texts published in Stillpoint Magazine are held by the authors thereof, and for all visual artworks by the visual artists thereof, effective from the year of publication. Stillpoint Magazine holds copyright to all additional images, branding, design and supplementary texts across stillpointmag.org as well as in additional social media profiles, digital platforms and print materials. All rights reserved.

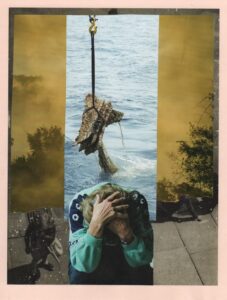

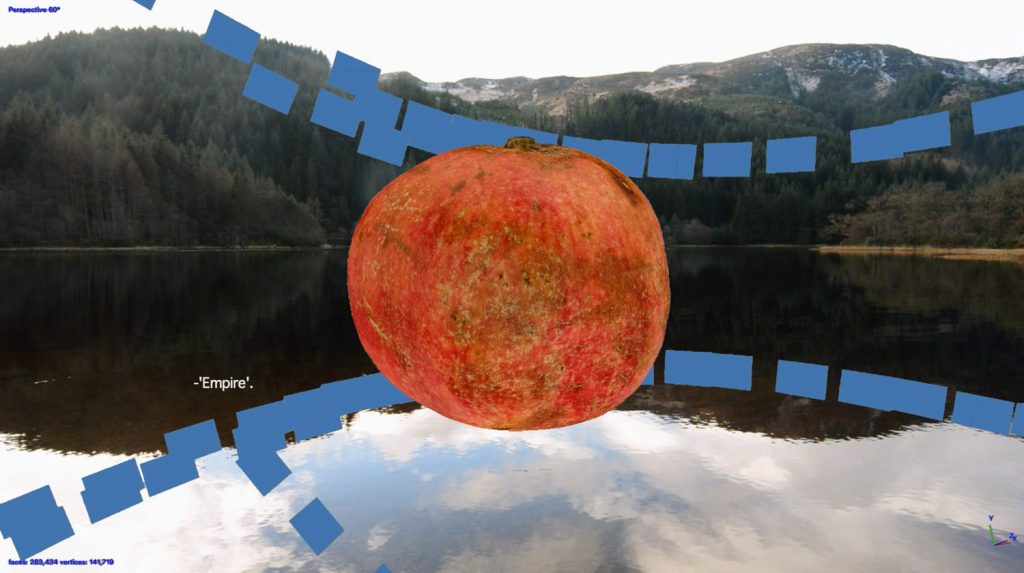

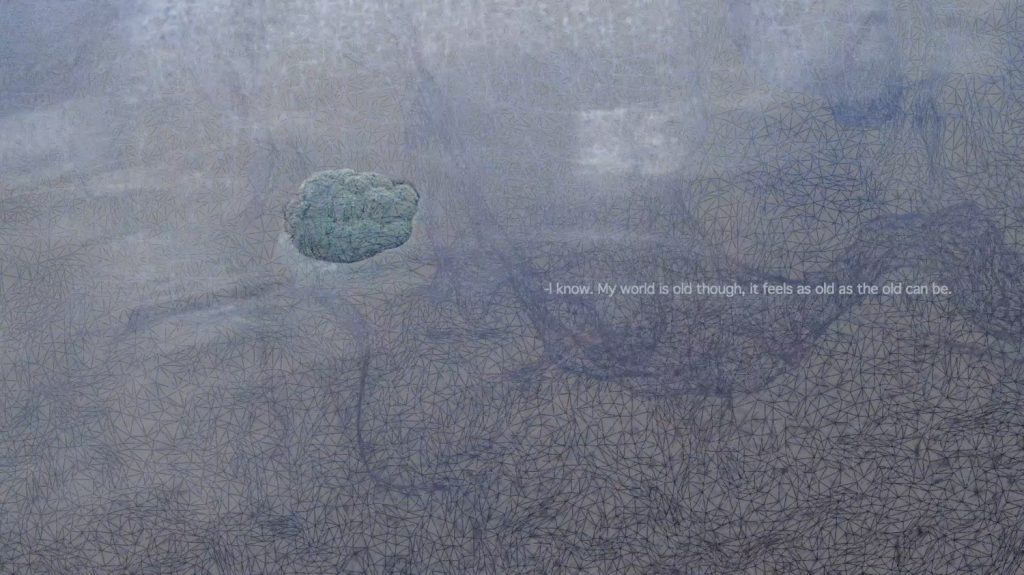

AMATEUR BOTANIST

Amateur Botanist combines photogrammetry-generated 3D models and underwater video footage to imagine and re-enact some of the ways plants may have traveled and spread around the planet. It hints at imperial expansions and is based on a narrated dialogue between two non-human species. This work explores notions of native and invasive, and tests the waters outside the anthropocentric world view.

Migrations of plants, as well as those of animals and humans, have shaped the planet, and have been affected by complex intertwined socio-political factors: wars, expansions, climate change, economy and eating habits. These factors, alongside time, also determine whether a species, an individual or a group gets labeled as local, invasive, indigenous, exotic, foreign or native. Amateur Botanist observes the simplest of botanical migrations – fruits, vegetables, and roots floating across bodies of water to reach new lands and spread. A dialogue between two non-human species also includes digitally rendered forms, extending the conversation from the human to the non-human, to the digital realm.

MAGGIE RYAN SANDFORD writer

Maggie Ryan Sandford is a writer, researcher, performer, and multimedia producer on a mission to increase educational equity and scientific literacy through the intersection of art and science. Work highlights include Smithsonian, National Geographic, McSweeney’s, ComedyCentral.com, and museums, stages, radio and television programs around the world. Research highlights include work with the National Science Foundation and National Institutes of Health. Her first book, Consider the Platypus: Evolution through Biology’s Most Baffling Beasts, is out now (Hachette, 2019).

KOTRYNA ULA KILIULYTE artist

Kotryna Ula Kiliulyte is an artist based in Glasgow, Scotland, working with moving image, photography, objects and print media. Their work exists at the intersection of histories and futures, fact and fiction, humour and politics. Currently exploring the theme of ecological mind, Kotryna works and exhibits internationally.

AMATEUR BOTANIST (2019) was commissioned by Centrala Space, Birmingham, for the Digital Diaspora exchange exhibition, curated by Aly Grimes. Made with support from the Lithuanian Culture Council.
